Responsible Use of AI in Professional Workplaces

In recent years, artificial intelligence (AI) has become an increasingly valuable tool for improving efficiency in modern office environments. Many routine tasks that once required significant time and effort can now be completed quickly with the assistance of AI-powered systems. For example, drafting documents, summarising lengthy materials, preparing reports, searching for information, and generating graphs or data visualisations can often be done within seconds. These capabilities allow professionals to streamline workflows, improve productivity, and devote more attention to higher-level analytical or strategic work.

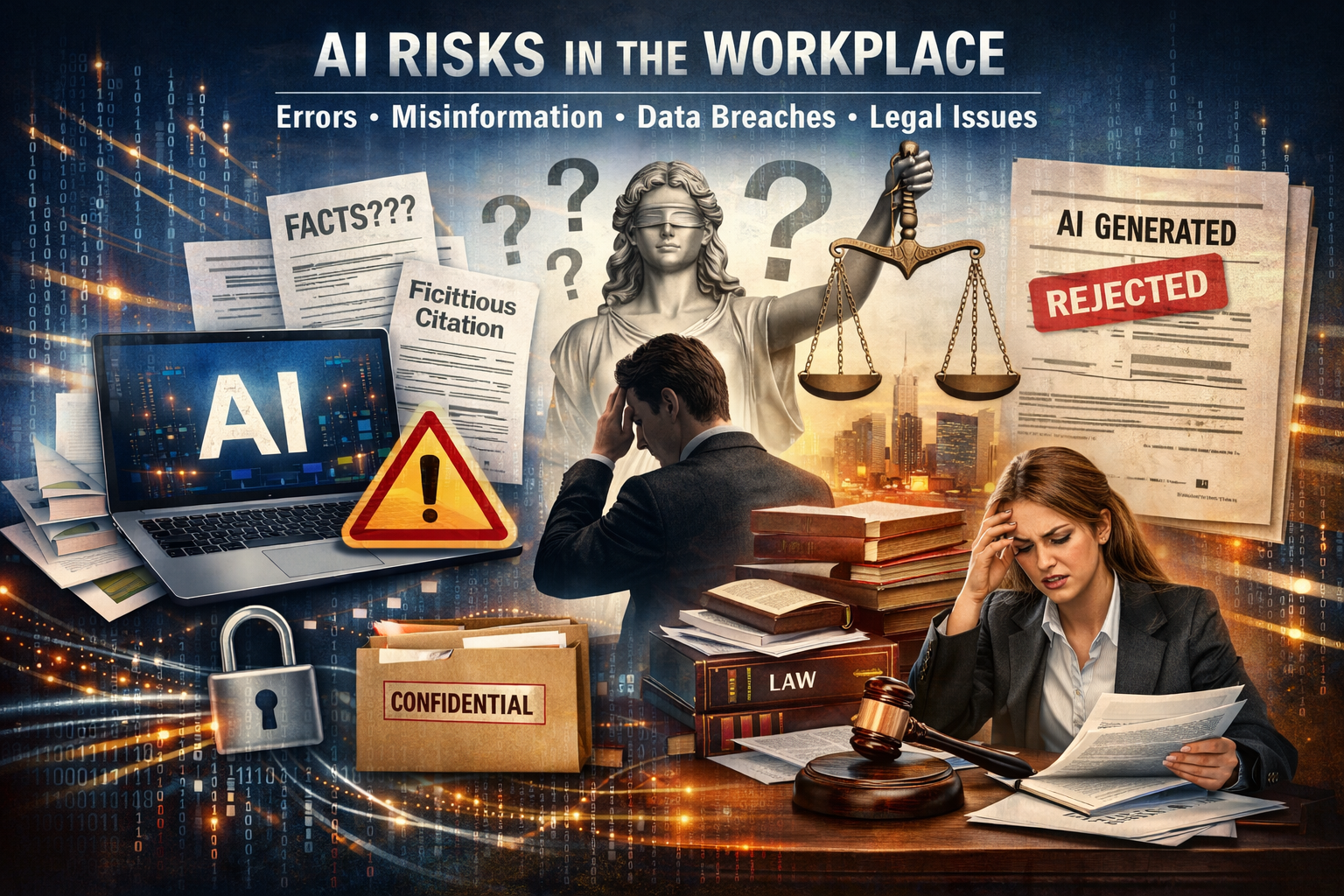

Despite these advantages, an overreliance on AI tools can also introduce significant risks. When users become accustomed to trusting AI-generated outputs without proper verification, errors may occur. In some cases, these inaccuracies may appear minor and have limited impact. However, in other situations the consequences can be serious, particularly when AI-generated content is used in professional, legal, or technical contexts.

One of the fundamental limitations of generative AI systems is that they produce responses by predicting statistically likely text rather than by retrieving verified facts. AI models learn patterns from large datasets and generate responses that appear plausible and coherent, but the information produced is not necessarily fact-checked or validated. As a result, AI outputs may contain biases, outdated information, irrelevant material, or even entirely fabricated references and citations. Because some generative AI systems may also be trained on user interactions and previously submitted content, inaccurate or misleading information may inadvertently become part of the data used to generate future responses.

Recognising these risks, regulatory and legal authorities have begun issuing guidance on the responsible use of generative AI. In Australia, the Supreme Court of New South Wales published "Practice Note SC GEN 23 – Use of Generative Artificial Intelligence" to provide guidance to legal practitioners. The practice note states that generative AI must not be used to create or draft the content of key evidentiary documents such as affidavits, witness statements, character references, or other material intended to reflect a witness’s evidence or opinion. Furthermore, the practice note emphasises that generative AI must not be used to alter, strengthen, or rephrase a witness’s evidence once it has been expressed in written form. These restrictions reflect the importance of maintaining the integrity and authenticity of legal evidence.

Careless use of AI may also create significant data security risks. Many users upload sensitive information to AI platforms because these tools are fast, convenient, and appear to simplify complex tasks. However, users may incorrectly assume that the information they provide is private or temporary. In April 2023, an engineer at a major semiconductor manufacturer used a publicly available AI tool to assist with debugging complex code. During the process, the engineer unintentionally uploaded proprietary source code, testing procedures, and confidential engineering notes. Because the platform’s default settings allowed user inputs to be incorporated into training datasets, the information became part of the provider’s internal data pool and could not easily be retrieved or removed. Although no malicious actor was involved, valuable intellectual property was effectively exposed due to a lack of caution.

Real-world legal consequences have also begun to emerge. In late 2025, a tribunal case in Australia involved a contractor who submitted a planning application to a local council; the application had been almost entirely generated by AI and had not been properly fact-checked. The submission contained inaccuracies and unsupported claims, and the contractor was subsequently penalised. In the same year, an Australian lawyer faced disciplinary action after filing court documents containing AI-generated citations and quotations that were later found to be fictitious. Beyond financial penalties, such incidents can cause lasting reputational damage.

These cases highlight an important lesson: while AI can significantly enhance productivity and capability in the workplace, it must be used responsibly. Human oversight, verification of facts, and professional judgement remain essential to ensure accuracy, maintain confidentiality, and protect both organisational integrity and professional reputation.